Linear Algebra is the study of vectors, vector spaces, and linear mappings represented by matrices. It provides the fundamental framework for solving systems of linear equations and analysing geometric structures. Candidates preparing for the RPSC Assistant Professor Maths Syllabus need a thorough grasp of these ideas to tackle intricate RPSC Previous Year Questions (PYQs) proficiently.

Understanding Vector Spaces and Subspaces

Vector Spaces constitute the core framework of Linear Algebra. A vector space V over a field F is a set of elements called vectors that satisfies specific axioms for vector addition and scalar multiplication. To confirm that a set is a vector space, you must verify ten axioms, including associativity, commutativity, and the existence of an additive identity.

Subspaces are subsets of a vector space that are themselves vector spaces under the same operations. A subset W is a subspace if the zero vector is in W and W is closed under addition and scalar multiplication. For example, the set of all vectors $(x, y, 0)$ is a subspace of $\mathbb{R}^3$. RPSC Previous Year Questions (PYQs) often require you to identify whether a given set satisfies these closure properties.

Linear Algebra builds upon these frameworks to establish more intricate processes. As you work through the RPSC Assistant Professor Maths Syllabus, pay attention to the properties of $\mathbb{R}^n$ and of function spaces. These examples show how the abstract axioms apply to concrete mathematical objects. Understanding the intersection and sum of subspaces is likewise crucial for competitive examinations.

Linear Dependence and Independence of Vectors

Linear dependence and independence determine the redundancy within a set of vectors in Linear Algebra. A set of vectors $\{v_1, v_2, \dots, v_n\}$ is linearly independent if the equation $c_1v_1 + c_2v_2 + \dots + c_nv_n = 0$ has only the trivial solution, in which every scalar $c_i$ is zero. If a solution exists with at least one non-zero scalar, the vectors are linearly dependent.

Consider the vectors $v_1 = (1, 0)$ and $v_2 = (0, 1)$. Since $c_1(1, 0) + c_2(0, 1) = (0, 0)$ implies $c_1 = 0$ and $c_2 = 0$, these vectors are linearly independent. If you add $v_3 = (2, 3)$, the set becomes dependent because $v_3 = 2v_1 + 3v_2$. This concept is central to the RPSC Assistant Professor Maths Syllabus because it determines whether a set of vectors contains redundant members and can therefore serve as an efficient coordinate system.

In exam scenarios, you test for independence by placing the vectors as the rows or columns of a matrix and calculating the determinant or rank. For n vectors in an n-dimensional space, a non-zero determinant of the resulting square matrix signals independence; in general, the vectors are independent exactly when the rank equals the number of vectors. Linear Algebra questions in RPSC Previous Year Questions (PYQs) often use this connection to assess your speed and precision in matrix reduction.
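The rank test described above can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed exam method: the helper names `rank` and `is_independent` are made up for this example, and exact fractions are used so that rounding does not affect the result.

```python
from fractions import Fraction

def rank(rows):
    """Rank of a matrix (given as a list of rows) via exact Gaussian elimination."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_cols = len(m[0]) if m else 0
    r = 0  # number of pivot rows found so far
    for col in range(n_cols):
        # look for a row at or below position r with a non-zero entry in this column
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        # eliminate the entries below the pivot
        for i in range(r + 1, len(m)):
            factor = m[i][col] / m[r][col]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r

def is_independent(vectors):
    """Vectors are independent exactly when the rank equals their count."""
    return rank(vectors) == len(vectors)

print(is_independent([(1, 0), (0, 1)]))          # True
print(is_independent([(1, 0), (0, 1), (2, 3)]))  # False
```

The second call reproduces the example of $v_3 = (2, 3)$: three vectors in a two-dimensional space can have rank at most 2, so they are dependent.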

Bases and Dimensions in Vector Spaces

A basis is a linearly independent set of vectors that spans the whole vector space. The number of vectors in any basis of a space is called its dimension. For example, the standard basis for $\mathbb{R}^3$ is $\{(1,0,0), (0,1,0), (0,0,1)\}$, so its dimension is three. Each vector in the space has a unique expression as a linear combination of the basis vectors.

The RPSC Assistant Professor Maths Syllabus highlights the Exchange Lemma and the Basis Extension Theorem. These principles demonstrate that any linearly independent collection can be expanded to constitute a basis. For any subspace W contained within V, the dimensionality of W will invariably be no greater than the dimensionality of V.

To determine the dimension of the sum of two subspaces $W_1$ and $W_2$, use the relationship $\dim(W_1 + W_2) = \dim(W_1) + \dim(W_2) - \dim(W_1 \cap W_2)$. This equation appears frequently in RPSC Previous Year Questions (PYQs). Becoming proficient with change of basis lets you simplify problems by selecting the most convenient coordinate system for a given linear transformation.
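As a quick illustration of the dimension formula, take two coordinate planes in three-dimensional real space. The subspaces below are chosen for this example and are not taken from a specific past paper.

```latex
\begin{aligned}
W_1 &= \{(x, y, 0)\}, \qquad W_2 = \{(0, y, z)\}, \qquad W_1 \cap W_2 = \{(0, y, 0)\} \\
\dim(W_1 + W_2) &= \dim(W_1) + \dim(W_2) - \dim(W_1 \cap W_2) = 2 + 2 - 1 = 3
\end{aligned}
```

Since the sum has dimension 3, it is the whole space, even though neither plane is.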

Core Topics in the Linear Algebra Syllabus

The following table outlines the essential components of the Linear Algebra curriculum for advanced competitive examinations.

| Category | Key Concepts |
|---|---|
| Structural Foundations | Vector Spaces, Subspaces, Basis, and Dimension |
| Mappings | Linear Transformations, Rank-Nullity Theorem, Change of Bases |
| Matrix Theory | Algebra of Matrices, Rank of Matrix, Canonical Forms |
| Spectral Theory | Eigenvalues and Eigenvectors, Cayley-Hamilton Theorem |
| Advanced Forms | Inner Product Spaces, Quadratic Forms, Jordan Forms |

Linear Transformations and Matrix Representation

Linear transformations are maps between vector spaces that preserve addition and scalar multiplication. A mapping $T: V \to W$ is linear provided that $T(u + v) = T(u) + T(v)$ and $T(cu) = cT(u)$ for all vectors u, v in V and every scalar c. Once bases are chosen, any linear mapping between finite-dimensional spaces can be expressed as a matrix. This connection between geometry and algebra is a fundamental element of Linear Algebra.

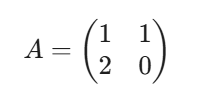

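To find the matrix representation of T, apply the transformation to the basis vectors of V and express the results as coordinates in the basis of W; these coordinate vectors form the columns of the matrix. If $T(x, y) = (x + y, 2x)$, then $T(1, 0) = (1, 2)$ and $T(0, 1) = (1, 0)$, so the matrix relative to the standard basis is:

$$\begin{bmatrix} 1 & 1 \\ 2 & 0 \end{bmatrix}$$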

This conversion allows you to use matrix multiplication to calculate transformations in Linear Algebra.
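The column-by-column construction can be checked with a short Python sketch. The helper names `matrix_of` and `apply` are illustrative only, and the sketch assumes the standard basis on both the domain and the target space.

```python
def matrix_of(T, n):
    """Build the matrix of T: its columns are T applied to e_1, ..., e_n."""
    basis = [tuple(1 if i == j else 0 for i in range(n)) for j in range(n)]
    columns = [T(*e) for e in basis]
    # zip(*columns) transposes the list of columns into a list of rows
    return [list(row) for row in zip(*columns)]

def apply(A, v):
    """Matrix-vector product A v."""
    return tuple(sum(a * x for a, x in zip(row, v)) for row in A)

def T(x, y):
    return (x + y, 2 * x)

A = matrix_of(T, 2)
print(A)                 # [[1, 1], [2, 0]]
print(apply(A, (3, 4)))  # (7, 6)
print(T(3, 4))           # (7, 6)
```

Multiplying by the matrix gives the same result as evaluating T directly, which is the point of the representation.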

A mastery of polynomial factorization is necessary for the RPSC Assistant Professor Maths Syllabus, particularly when dealing with these types of functions. The Rank-Nullity Theorem asserts that the dimension of the image plus the dimension of the kernel equals the dimension of the domain: $\operatorname{rank}(T) + \operatorname{nullity}(T) = \dim(V)$. Applying this principle provides a useful cross-check when working through RPSC Previous Year Questions (PYQs). A change of basis uses a non-singular matrix P, and the matrix of the same transformation in the new basis is the similar matrix $B = P^{-1}AP$.
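A small worked instance of the Rank-Nullity Theorem may help. The map below is an example chosen for illustration, not one taken from the syllabus.

```latex
\begin{aligned}
T &: \mathbb{R}^3 \to \mathbb{R}^2, \qquad T(x, y, z) = (x + y,\; y + z),
\qquad [T] = \begin{bmatrix} 1 & 1 & 0 \\ 0 & 1 & 1 \end{bmatrix} \\
\ker T &= \{(x, y, z) : x + y = 0,\; y + z = 0\} = \operatorname{span}\{(1, -1, 1)\} \\
\operatorname{rank}(T) + \operatorname{nullity}(T) &= 2 + 1 = 3 = \dim(\mathbb{R}^3)
\end{aligned}
```

The two rows of the matrix are independent, so the rank is 2, and the kernel is a line, so the nullity is 1.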

Eigenvalues and Eigenvectors in Matrix Algebra

Eigenvalues and Eigenvectors characterize the scaling amounts and fixed directions associated with a linear transformation. For a square matrix A, a value λ qualifies as an eigenvalue if there is a non-zero vector v satisfying Av = λv. These values are determined by solving the characteristic equation det(A – λI) = 0.

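Linear Algebra uses these values to decompose complex matrices. For a $2 \times 2$ matrix A with diagonal entries 4 and 3 whose off-diagonal entries have product 2, for instance $A = \begin{bmatrix} 4 & 1 \\ 2 & 3 \end{bmatrix}$, the characteristic equation is $(4-\lambda)(3-\lambda) - 2 = 0$, leading to $\lambda^2 - 7\lambda + 10 = 0$. The roots are $\lambda = 5$ and $\lambda = 2$. Finding the corresponding eigenvectors involves solving the system $(A - \lambda I)v = 0$ for each $\lambda$.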

The RPSC Assistant Professor Maths Syllabus incorporates the characteristics of eigenvalues, including how their total matches the matrix’s trace and their product equals the determinant. Past RPSC exam questions (PYQs) frequently assess these quick methods to aid time management in the test. Knowledge of both algebraic and geometric multiplicity is furthermore essential for establishing whether a matrix can be diagonalized.
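The trace and determinant shortcuts can be verified numerically. In the sketch below, the matrix `A` is an assumption: it is one matrix whose characteristic equation is the $\lambda^2 - 7\lambda + 10 = 0$ discussed earlier, and the function name `eigenvalues_2x2` is made up for this example.

```python
import math

def eigenvalues_2x2(A):
    """Real eigenvalues of a 2x2 matrix from lambda^2 - trace*lambda + det = 0."""
    (a, b), (c, d) = A
    trace = a + d
    det = a * d - b * c
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = math.sqrt(disc)
    return (trace + root) / 2, (trace - root) / 2

A = [[4, 1], [2, 3]]  # assumed example matrix: trace 7, determinant 10
lam1, lam2 = eigenvalues_2x2(A)
print(lam1, lam2)   # 5.0 2.0
print(lam1 + lam2)  # 7.0, the trace
print(lam1 * lam2)  # 10.0, the determinant

# Check A v = lambda v for one eigenvector per eigenvalue.
for lam, v in [(5, (1, 1)), (2, (1, -2))]:
    Av = tuple(sum(a * x for a, x in zip(row, v)) for row in A)
    print(Av == tuple(lam * x for x in v))  # True
```

For this matrix, solving $(A - \lambda I)v = 0$ gives the eigenvector $(1, 1)$ for $\lambda = 5$ and $(1, -2)$ for $\lambda = 2$.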

Cayley-Hamilton Theorem Problems and Applications

The Cayley-Hamilton Theorem states that every square matrix satisfies its own characteristic equation. If the characteristic polynomial of A is $p(\lambda) = \det(A - \lambda I)$, then $p(A) = 0$, the zero matrix. This theorem is a vital tool for calculating high powers of matrices and finding matrix inverses without using the adjugate method.

Cayley-Hamilton Theorem problems often ask you to express $A^{-1}$ or $A^n$ as a polynomial in A of degree less than the order of the matrix. For a $2 \times 2$ matrix with characteristic equation $\lambda^2 - 5\lambda + 6 = 0$, the theorem gives $A^2 - 5A + 6I = 0$. Because the constant term 6 is non-zero, A is invertible; multiplying by $A^{-1}$ yields $A - 5I + 6A^{-1} = 0$, so $A^{-1} = \frac{1}{6}(5I - A)$.
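The inverse formula can be checked on a concrete matrix. The matrix `A` below is a hypothetical example chosen so that its trace is 5 and its determinant is 6, which gives the characteristic equation $\lambda^2 - 5\lambda + 6 = 0$. Exact fractions avoid rounding.

```python
from fractions import Fraction

def matmul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]

A = [[1, -2], [1, 4]]  # assumed example: trace 5, determinant 6
I = [[1, 0], [0, 1]]

# Cayley-Hamilton: A^2 - 5A + 6I should be the zero matrix.
A2 = matmul(A, A)
residual = [[A2[i][j] - 5 * A[i][j] + 6 * I[i][j] for j in range(2)]
            for i in range(2)]
print(residual)  # [[0, 0], [0, 0]]

# Inverse from the theorem: A^{-1} = (5I - A) / 6.
A_inv = [[Fraction(5 * I[i][j] - A[i][j], 6) for j in range(2)]
         for i in range(2)]
print(matmul(A, A_inv) == I)  # True
```

The same pattern extends to higher powers: since $A^2 = 5A - 6I$, every power of A reduces to a combination of A and I.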

You will find Cayley-Hamilton Theorem problems throughout the RPSC Previous Year Questions (PYQs). These problems test your ability to manipulate matrix equations. The theorem also provides a pathway to minimal polynomials. The minimal polynomial is the monic polynomial of lowest degree that annihilates the matrix. It divides the characteristic polynomial and has the same roots, though possibly with lower multiplicities.

Inner Product Spaces and Orthonormal Bases

In Linear Algebra, an orthonormal basis simplifies the projection of vectors and the representation of transformations. For any vector v, its coordinates in an orthonormal basis $\{u_1, \dots, u_n\}$ are simply the inner products $\langle v, u_i \rangle$. This efficiency is why the RPSC Assistant Professor Maths Syllabus includes inner product properties and the Cauchy-Schwarz inequality.

RPSC Previous Year Questions (PYQs) frequently require determining the orthogonal complement of a subspace or applying the Riesz Representation Theorem. Familiarity with complex inner products, particularly the conjugate symmetry property, is necessary. Proficiency in these areas is crucial before tackling self-adjoint and unitary operators.

Canonical Forms and Matrix Diagonalization

Canonical forms offer a simpler, standardized representation of a matrix under a change of basis. Diagonalization means finding a diagonal matrix that is similar to a given matrix A. This is possible only if A has a full set of linearly independent eigenvectors. If diagonalization is not feasible, the Jordan Canonical Form is used instead.

The Jordan form is a block-diagonal matrix in which each block corresponds to a specific eigenvalue. This structure is useful when solving systems of linear differential equations. The RPSC Assistant Professor Maths Syllabus requires recognizing Triangular, Diagonal, and Jordan forms by examining the minimal and characteristic polynomials.

The rank of a matrix plays a significant role in determining these forms. The rank is the dimension of the row space, which always equals the dimension of the column space. RPSC Previous Year Questions (PYQs) frequently ask you to find the rank using elementary row operations or the echelon form. A matrix is diagonalizable over a given field if and only if its minimal polynomial factors into distinct linear factors over that field.

Quadratic Forms and Their Reduction

Quadratic forms are homogeneous polynomials of degree two, for example $Q(x, y) = ax^2 + bxy + cy^2$. In Linear Algebra, these forms are represented by symmetric matrices. Reducing a quadratic form means changing variables to remove the mixed terms, leaving a combination of squared terms only. This procedure is commonly referred to as diagonalizing the symmetric matrix.

The classification of quadratic forms depends on the signs of the eigenvalues of the associated symmetric matrix. A form is positive definite when every eigenvalue is positive and negative definite when every eigenvalue is negative. If some eigenvalues are zero and the rest share one sign, the form is semidefinite; if both positive and negative eigenvalues occur, it is indefinite. The RPSC Assistant Professor Maths Syllabus emphasizes Sylvester’s Law of Inertia, which asserts that the numbers of positive, negative, and zero coefficients in the reduced form do not depend on the change of variables used.

RPSC Previous Year Questions (PYQs) often present a quadratic form and ask for its rank, index, or signature. For example, $f(x, y, z) = x^2 + 2y^2 - z^2$ has a signature based on the signs (+, +, -). You must use congruent transformations or eigenvalue analysis to reduce these forms effectively. This section of Linear Algebra connects directly to geometry and optimization.
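Once a form is reduced to a sum of squares, its rank, index, and signature can be read off from the coefficients. The sketch below assumes one common convention, in which the index is the number of positive terms and the signature is the number of positive terms minus the number of negative terms; some textbooks define these differently, so check the convention your syllabus uses. The function name is illustrative.

```python
def rank_index_signature(coeffs):
    """Rank, index, and signature of a diagonal quadratic form.

    Assumed convention: index = number of positive coefficients,
    signature = (number of positive) - (number of negative).
    """
    pos = sum(1 for c in coeffs if c > 0)
    neg = sum(1 for c in coeffs if c < 0)
    return pos + neg, pos, pos - neg

# f(x, y, z) = x^2 + 2y^2 - z^2 has coefficients 1, 2, -1
print(rank_index_signature([1, 2, -1]))  # (3, 2, 1)
```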

Limitations of Standard Linear Algebra Methods

Although Linear Algebra techniques are powerful, they face issues of numerical stability and computational cost. A common student assumption is that computing the determinant is the best way to check whether a matrix is invertible. For large matrices, however, determinant computation is expensive and susceptible to rounding errors. Estimating the numerical rank of a matrix is generally more dependable in practice.
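A small experiment shows how a computed determinant can mislead. The example below is constructed for illustration: the diagonal matrix with 0.1 in every diagonal position is trivially invertible (its inverse has 10 on the diagonal), yet its determinant is too small to represent in double-precision floating point.

```python
n = 400
diagonal = [0.1] * n  # the n x n matrix 0.1 * I, whose inverse is 10 * I

det_value = 1.0
for d in diagonal:
    det_value *= d  # determinant of a diagonal matrix = product of its diagonal

print(det_value)         # 0.0, because the true value 1e-400 underflows
print(det_value == 0.0)  # True, although the matrix is invertible
```

A test of the form "determinant equals zero" would wrongly report this matrix as singular, which is why rank-based or conditioning-based checks are preferred numerically.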

A further limitation arises with matrices that lack a full set of linearly independent eigenvectors. These matrices are termed defective. In these instances, ordinary diagonalization fails, and you should use the Jordan Canonical Form instead. Understanding these limitations prevents mistakes when applying the methods of the RPSC Assistant Professor Maths Syllabus to intricate problems.

In RPSC Previous Year Questions (PYQs), you may encounter matrices that are close to singular. In such ill-conditioned systems, small changes in the input data can cause large changes in the result. Knowing when to choose singular value decomposition over conventional eigenvalue decomposition distinguishes a highly skilled practitioner.

Practical Application: Image Compression and Data Science

Linear Algebra is key to contemporary image compression methods via Singular Value Decomposition (SVD). An image is fundamentally a large array of pixel intensities. Applying SVD factors this array as $A = U \Sigma V^T$, where the diagonal matrix $\Sigma$ holds the singular values, ordered from greatest to least. Retaining just the largest singular values allows the image to be represented with considerably less information.
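The saving from truncation can be made concrete. The formula below is the standard rank-k truncation of the SVD; the image size and the choice of k in the note that follows are illustrative numbers, not figures from a real image.

```latex
\begin{aligned}
A \approx A_k &= \sum_{i=1}^{k} \sigma_i \, u_i v_i^{T}, \qquad \sigma_1 \ge \sigma_2 \ge \dots \ge \sigma_k \\
\text{storage for } A_k &= k\,(m + n + 1) \quad \text{numbers, versus } mn \text{ for the full } m \times n \text{ array}
\end{aligned}
```

For a hypothetical $1000 \times 1000$ image with $k = 50$, this is $50 \times 2001 = 100{,}050$ numbers instead of $1{,}000{,}000$, roughly a tenth of the original storage.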

In data science, Principal Component Analysis (PCA) uses Eigenvalues and Eigenvectors to reduce the dimensionality of large datasets. This process identifies the directions of maximum variance. When you study the RPSC Assistant Professor Maths Syllabus, remember that these abstract concepts are the engines behind facial recognition, search engine ranking, and recommendation systems.

In a data matrix, the rank identifies the number of linearly independent features. When a dataset contains redundant information, its matrix has a smaller rank than its size implies. Working through RPSC Previous Year Questions (PYQs) on rank and nullity sharpens the understanding required for managing large datasets effectively. Linear Algebra remains one of the most widely applied fields of mathematics today.

Conclusion

To truly conquer the broad domain of Linear Algebra, one needs a calculated equilibrium between abstract concepts and diligent computation. By concentrating on the inherent structure within vector spaces and the practical strength of matrix operations, you establish the essential analytical groundwork for the RPSC Assistant Professor selection process. VedPrep offers the focused instruction and organized materials needed to approach this challenging curriculum assuredly. Regular engagement with intricate transformations and eigenvalue problems guarantees readiness for any technical hurdle encountered in the competitive academic setting.

Frequently Asked Questions (FAQs)

What is the difference between linear dependence and independence?

Linear independence occurs when no vector in a set is a linear combination of the others. Equivalently, the only way a linear combination of the vectors can equal zero is with all coefficients zero. In contrast, linear dependence means at least one vector is redundant. For n vectors in an n-dimensional space, you can check this by calculating the determinant of the matrix they form; in other cases, compare the rank of that matrix with the number of vectors.

What are linear transformations in the RPSC syllabus?

Linear transformations are functions between vector spaces that preserve the operations of addition and scalar multiplication. You can represent these transformations as matrices to perform complex calculations. These mappings are essential for understanding how geometric shapes rotate, scale, or shear within a defined coordinate system.

What is the significance of the rank of a matrix?

The rank represents the number of linearly independent rows or columns in a matrix. It indicates the dimension of the image of the linear transformation associated with that matrix. Finding the rank is a standard requirement in RPSC Previous Year Questions to determine if a system has solutions.

How does the Cayley-Hamilton Theorem apply to square matrices?

This theorem states that every square matrix satisfies its own characteristic equation. If you know the characteristic polynomial of a matrix, you can replace the variable with the matrix itself to get a zero matrix. This property simplifies the process of finding matrix inverses and high powers.

What is the procedure for the Gram-Schmidt process?

The Gram-Schmidt process converts a set of linearly independent vectors into an orthonormal basis of their span. From each vector, you subtract its projections onto the previously constructed orthogonal vectors, so that the results are mutually orthogonal. Normalization then divides each vector by its length to give it a magnitude of one.
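The procedure can be sketched in Python for real vectors with the ordinary dot product. This is a minimal version under stated assumptions: it normalizes each vector as soon as it is built (which gives the same basis as normalizing at the end), it uses the "modified" ordering that subtracts projections one at a time from the running remainder, and the tolerance `1e-12` is an arbitrary choice.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(vectors):
    """Return an orthonormal basis for the span of linearly independent vectors."""
    basis = []
    for v in vectors:
        w = list(v)
        for u in basis:
            coeff = dot(w, u)  # component of the remainder along unit vector u
            w = [wi - coeff * ui for wi, ui in zip(w, u)]
        norm = math.sqrt(dot(w, w))
        if norm < 1e-12:
            raise ValueError("vectors are linearly dependent")
        basis.append([wi / norm for wi in w])
    return basis

basis = gram_schmidt([(1, 1, 0), (1, 0, 1), (0, 1, 1)])

# Coordinates of v in an orthonormal basis are just inner products.
v = (2, 3, 4)
coords = [dot(v, u) for u in basis]
rebuilt = [sum(c * u[i] for c, u in zip(coords, basis)) for i in range(3)]
print([round(x, 10) for x in rebuilt])  # [2.0, 3.0, 4.0]
```

The last lines also illustrate why orthonormal bases are convenient: no linear system has to be solved to find the coordinates of a vector.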

How do you reduce a quadratic form to its canonical form?

Reduction involves a change of variables to eliminate cross-product terms, leaving only squared terms. You typically use orthogonal transformations or Lagrange's method of completing the square. The resulting form reveals the rank, index, and signature, which are common metrics in RPSC competitive mathematics.

How do you find the matrix representation of a linear transformation?

Apply the transformation to each basis vector of the domain space. Express these results as coordinates relative to the basis of the target space. Place these coordinate vectors as columns in a matrix. This matrix allows you to perform the transformation using standard matrix multiplication.

Why does matrix diagonalization sometimes fail?

Diagonalization fails if a matrix is defective, meaning it lacks enough linearly independent eigenvectors. This happens when the geometric multiplicity of an eigenvalue is less than its algebraic multiplicity. In such instances, you must use the Jordan Canonical Form as a substitute for a diagonal matrix.
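The smallest standard example of a defective matrix makes the multiplicity comparison concrete. The geometric multiplicity is computed as $n - \operatorname{rank}(A - \lambda I)$.

```latex
\begin{aligned}
A &= \begin{bmatrix} 1 & 1 \\ 0 & 1 \end{bmatrix}, \qquad
\det(A - \lambda I) = (1 - \lambda)^2
\;\Rightarrow\; \lambda = 1 \text{ with algebraic multiplicity } 2 \\
A - I &= \begin{bmatrix} 0 & 1 \\ 0 & 0 \end{bmatrix}, \qquad
\operatorname{rank}(A - I) = 1
\;\Rightarrow\; \text{geometric multiplicity} = 2 - 1 = 1
\end{aligned}
```

Only one independent eigenvector exists, so A cannot be diagonalized. It is already a single Jordan block.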

What should you do if the determinant of a matrix is zero?

A zero determinant indicates that the matrix is singular and does not have an inverse. It also means the rows or columns are linearly dependent. In the context of the RPSC syllabus, this implies the linear transformation collapses the space into a lower dimension.

What causes errors in Cayley-Hamilton Theorem problems?

Common errors involve sign mistakes during the expansion of the determinant or forgetting to multiply the constant term by the identity matrix. Always verify that the highest power of the matrix in the equation matches the order of the matrix. These checks ensure accuracy in RPSC competitive mathematics.

When is the Jordan Canonical Form necessary?

The Jordan Canonical Form is necessary for non-diagonalizable matrices. It provides a nearly diagonal structure with ones on the super-diagonal for repeated eigenvalues. This form is the most simplified representation possible for any linear operator on a finite-dimensional complex vector space.

What is the difference between an inner product and a dot product?

A dot product is a specific type of inner product used in Euclidean space. An inner product is a more general concept that can be defined for any vector space, including function spaces. Both allow you to measure lengths and angles within the mathematical structure.

How do you classify a quadratic form as positive definite?

A quadratic form is positive definite if all its eigenvalues are strictly positive. Alternatively, you can check if all leading principal minors of its symmetric matrix are positive. This classification determines the shape of the associated geometric surface and is vital for optimization.
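The leading-principal-minor test can be sketched as follows. The determinant here uses cofactor expansion, which is fine for the small matrices typical of exam problems but far too slow for large ones. The function names and the example matrices are chosen for illustration.

```python
def det(M):
    """Determinant by cofactor expansion along the first row (small matrices only)."""
    if len(M) == 1:
        return M[0][0]
    total = 0
    for j, a in enumerate(M[0]):
        minor = [row[:j] + row[j + 1:] for row in M[1:]]
        total += (-1) ** j * a * det(minor)
    return total

def is_positive_definite(S):
    """Check that every leading principal minor of the symmetric matrix S is positive."""
    return all(det([row[:k] for row in S[:k]]) > 0
               for k in range(1, len(S) + 1))

# Leading minors are 2, 3, 4, all positive.
print(is_positive_definite([[2, -1, 0], [-1, 2, -1], [0, -1, 2]]))  # True
# Matrix of x^2 + 2y^2 - z^2: leading minors are 1, 2, -2.
print(is_positive_definite([[1, 0, 0], [0, 2, 0], [0, 0, -1]]))     # False
```

Note that the test requires the matrix to be symmetric; the sketch does not check this.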

What is the minimal polynomial of a matrix?

The minimal polynomial is the unique monic polynomial of lowest degree that annihilates a matrix. While it shares the same roots as the characteristic polynomial, the powers of the factors might be lower. A matrix is diagonalizable if and only if its minimal polynomial is a product of distinct linear factors.